A performance analysis of the parallel raytracing algorithm for the Blue Gene/L.

Download: Paper (PDF)

Objective

To test the performance of raytracing on the Blue Gene/L, using the Message Passing Interface (MPI) library to parallelize the rendering of a PPM image.

Description

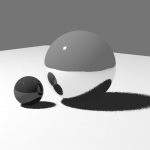

Ray tracing, a graphics rendering method that casts rays from the camera to determine color and reflections, is one of many ways to create photo-realistic scenery with reflective surfaces. As part of a quest to render more realistic graphics faster, we attempted to parallelize the algorithm for use on supercomputers, using the 3-D model on the right.

Results

We ran the algorithm under various conditions. In all cases, our raytracer ran faster than the single-threaded version on a typical computer.

When we increased the number of processors, our algorithm’s performance improved. In fact, the time it took to render the same image decreased logarithmically.
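The summary above does not say how pixels were divided among processors. As a minimal sketch, assuming a simple block partition of image rows across MPI ranks, the work split could look like the following. The function name `rows_for_rank` and the row-based decomposition are illustrative assumptions, not the method from the paper.

```python
def rows_for_rank(rank, num_ranks, height):
    """Return the range of image rows assigned to one rank.

    Assumption: rows are split into contiguous blocks, and any
    leftover rows go to the lowest-numbered ranks, one each.
    """
    base, extra = divmod(height, num_ranks)
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return range(start, stop)


if __name__ == "__main__":
    # Hypothetical example: a 10-row image shared by 4 ranks.
    for rank in range(4):
        print(rank, list(rows_for_rank(rank, 4, 10)))
```

Under this scheme each rank renders its own rows independently, and the results only need to be gathered once at the end to write the image.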

When we increased the maximum number of reflections (bounces), our algorithm slowed exponentially. This is expected, as each reflection is a recursive call to retrieve the next color.
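To see why recursion depth can drive exponential cost, here is a rough sketch that counts the rays traced for a single pixel. It assumes the worst case, where every ray hits a reflective surface and every hit spawns a fixed number of secondary rays. The parameter `rays_per_hit` is hypothetical; the summary does not state how many rays each hit spawns.

```python
def rays_traced(max_bounces, rays_per_hit=2):
    """Count rays traced for one pixel in the worst case.

    Assumption: every ray hits a surface, and every hit spawns
    `rays_per_hit` secondary rays until the bounce limit is reached.
    """
    if max_bounces == 0:
        return 1  # only the current ray; recursion stops here
    return 1 + rays_per_hit * rays_traced(max_bounces - 1, rays_per_hit)


if __name__ == "__main__":
    for depth in range(6):
        print(depth, rays_traced(depth))
```

With `rays_per_hit=1` the count grows only linearly with the bounce limit; the exponential growth appears once each hit spawns two or more rays.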

Interestingly, we found a bizarre behavior when increasing the number of pixels to render. While our algorithm’s render time grew linearly for the most part, there was a huge jump in performance when we rendered 2560000 pixels. We believe this anomaly is due to the number of pixels being a multiple of 2.

Extensions

For artistic taste, we also added a few filtering methods, gray-shading and shade limiting, which are applied after accumulating all pixel values.

When we first attempted to render a gray-shaded image, we naïvely gave the red, green, and blue values equal weights to calculate the gray value (0-255). This gave us an obnoxiously bright image.

Since each color contributes to perceived brightness differently, the weights had to be adjusted to reflect this. In this image, we gave red a weight of 0.299, green 0.587, and blue 0.114.
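The two gray-shading approaches can be compared side by side. The sketch below uses the weights quoted above, which match the standard ITU-R BT.601 luma coefficients. The function names and the rounding to the nearest integer are assumptions made for illustration.

```python
def gray_equal(r, g, b):
    """Naive gray value: each channel gets an equal weight of 1/3."""
    return round((r + g + b) / 3)


def gray_weighted(r, g, b):
    """Gray value using the weights 0.299 (red), 0.587 (green), 0.114 (blue)."""
    return round(0.299 * r + 0.587 * g + 0.114 * b)


if __name__ == "__main__":
    # Pure blue: the equal-weight version is far brighter than the weighted one.
    print(gray_equal(0, 0, 255), gray_weighted(0, 0, 255))
```

Because the weights sum to 1, pure white still maps to 255, while blue-heavy pixels come out noticeably darker than they do under equal weighting.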

Our approach to limiting color shades works by “bucketing” ranges of red, green, and blue values. For example, to limit red to 3 shades, we create 3 buckets: the first maps values from 0 to 85 to black (0); the second, from 85 to 170, to mid-red (128); and the last, from 170 to 255, to red (255).
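A minimal sketch of the bucketing idea for a single 0-255 channel value follows. The function name `limit_shades` is hypothetical, and the handling of boundary values (85 falls in the first bucket, 170 in the second) is an assumption, since the description above leaves the boundaries ambiguous.

```python
def limit_shades(value, shades=3):
    """Map a 0-255 channel value onto `shades` evenly spaced levels."""
    if shades < 2:
        raise ValueError("need at least 2 shades")
    # Pick the bucket: 256 possible values split into `shades` ranges.
    bucket = min(value * shades // 256, shades - 1)
    # Spread bucket indices over 0..255, rounding to the nearest integer.
    return (bucket * 255 + (shades - 1) // 2) // (shades - 1)


if __name__ == "__main__":
    for value in (0, 85, 86, 170, 171, 255):
        print(value, limit_shades(value))
```

For 3 shades this reproduces the output levels 0, 128, and 255 from the example; applying the same function to the green and blue channels limits the whole image.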